If you’ve used ChatGPT, then you know it’s like the Shakespeare of AI, spinning out text that’s so human-like that it might just write the next great American novel. It has come a long way in a shockingly short amount of time thanks to advancements made to the so-called InstructGPT language models.

InstructGPT is an advanced AI-powered language model developed by OpenAI that’s designed to follow instructions given in a text prompt. It represents a significant evolution from previous models like GPT-3 and offers enhanced capabilities in understanding and generating text, making it a powerful tool for a wide range of applications, from customer service to content creation and beyond.

This article will delve into the intricacies of InstructGPT, exploring its capabilities, applications, and the impact it’s having on AI research and development. We’ll also discuss the ethical considerations and challenges that come with such advanced AI technology.

Let’s dive in!

What is InstructGPT?

InstructGPT is OpenAI’s name for a family of language models, built on GPT-3, that are fine-tuned with human feedback so that they follow instructions more reliably than earlier GPT models.

GPT stands for “Generative Pretrained Transformer.” It’s a type of language prediction model developed by OpenAI that is:

- “Generative” because it can generate text.

- “Pretrained” because it is first trained on a large corpus of text, before any fine-tuning on examples written by human labelers.

- “Transformer” because it uses the transformer neural network architecture to model the context of words in text.

At its core, InstructGPT operates on the same fundamental principle as other GPT language models: it’s trained on a vast amount of text data and uses this training to generate text based on the input it receives.

However, what sets InstructGPT models apart is their ability to follow instructions given in a text prompt. This is a significant advancement over previous models, which were primarily focused on predicting the next word in a sentence.

InstructGPT is trained using Reinforcement Learning from Human Feedback (RLHF), a method that involves an iterative process of fine-tuning the model based on feedback from human evaluators.

This allows the model to improve over time, learning to generate better responses and follow instructions more accurately. InstructGPT models are also better at inferring what the user intends, and their outputs are less prone to toxic language.

In the next section, we’ll go over the evolution of AI-powered language models developed by OpenAI.

Evolution of AI-Powered Language Models

The journey of AI-powered language models has been a thrilling ride, with each new model bringing us closer to the goal of creating AI that can truly understand and generate human-like text.

Let’s take a walk down memory lane and see how these models have evolved over the years:

1. GPT-1 (2018): The first in the line of Generative Pre-trained Transformers, GPT-1 was a big step forward. Trained on a large corpus of unpublished books (BooksCorpus), it could generate sentences that made sense and were relevant to the context. But it was still a bit of a novice when it came to understanding complex instructions or keeping the story straight over longer texts.

2. GPT-2 (2019): GPT-2 was like GPT-1 after a serious workout. It was trained on a much bigger dataset and had a larger model size, which meant it could generate text that was more coherent and nuanced. It could write essays, answer questions, and even dabble in language translation. But, like its predecessor, it still had a hard time understanding complex instructions and keeping its story straight over really long texts.

3. GPT-3 (2020): GPT-3 was the superstar of the family. With 175 billion parameters, it was capable of generating impressively human-like text. It could write essays, answer complex questions, translate languages, and even write code. But even this superstar had its weak spots. It could sometimes generate incorrect responses or potentially harmful outputs using toxic language, and it didn’t always handle sensitive topics appropriately.

4. InstructGPT (2022): The latest prodigy, InstructGPT, took the capabilities of GPT-3 and kicked them up a notch. It was fine-tuned on human-written demonstrations and then with reinforcement learning from human feedback to capture human intentions and follow instructions in a text prompt, making it a powerful tool for a wide range of applications. But like its older siblings, it’s not perfect and can sometimes produce incorrect or nonsensical responses that don’t align with human intent or desired behavior.

From GPT-1 to InstructGPT, each stage of this evolution has brought us closer to the goal of creating general-purpose AI systems that can truly understand and generate human-like text.

InstructGPT models are the first to utilize OpenAI’s cutting-edge alignment research. A key motivation for the research was aligning language models to increase their truthfulness and helpfulness while mitigating their harms and biases.

How InstructGPT Models Compare to GPT-3

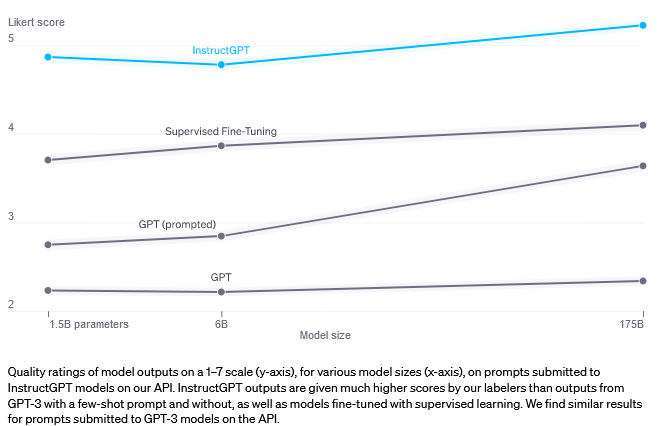

When compared to its predecessor, GPT-3, InstructGPT offers several key improvements, particularly when it comes to generating outputs that are more truthful and less toxic.

GPT-3 large language models can be prompted to perform natural language tasks. However, these models can sometimes generate outputs that are untruthful, toxic, or harmful.

This is partly because GPT-3 was trained to predict the next word on a large dataset of internet text, rather than to safely perform the language task the user wants. In other words, GPT-3 models aren’t fully aligned with their users.

To make the models safer, more helpful, and more aligned, OpenAI used reinforcement learning from human feedback. Human labelers write demonstrations of the desired model behavior and rank several model outputs from best to worst.

The demonstrations are used for supervised fine-tuning, and the rankings are used to train a reward model that guides further fine-tuning with reinforcement learning. The result is models that are much better at following instructions than GPT-3. They also make up facts less often and generate outputs that are less toxic.
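To picture how ranked outputs can become a training signal, here is a minimal sketch of the pairwise ranking loss commonly used to train a reward model in RLHF. It is an illustration in plain Python, not OpenAI’s implementation: the function name and the example scores are made up, and a real reward model would be a neural network trained by gradient descent on this kind of loss.

```python
import math
from itertools import combinations


def pairwise_ranking_loss(rewards_best_first):
    """Average -log(sigmoid(r_preferred - r_other)) over all ranked pairs.

    `rewards_best_first` holds hypothetical reward scores for several outputs
    to the same prompt, ordered as a human labeler ranked them (best first).
    """
    # combinations() keeps input order, so the first item of each pair
    # is always the output the labeler ranked higher.
    pairs = list(combinations(rewards_best_first, 2))
    if not pairs:
        raise ValueError("need at least two ranked outputs")
    total = 0.0
    for preferred, other in pairs:
        # -log(sigmoid(d)) rewritten as log(1 + exp(-d)).
        total += math.log1p(math.exp(-(preferred - other)))
    return total / len(pairs)


# Illustrative scores only: the first list agrees with the human ranking,
# the second contradicts it.
agrees = pairwise_ranking_loss([2.0, 0.5, -1.0])
disagrees = pairwise_ranking_loss([-1.0, 0.5, 2.0])
```

The loss is smaller when the scores agree with the labeler’s ordering, so minimizing it nudges the reward model toward scoring preferred outputs higher.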

InstructGPT models spent more than a year in beta on the application programming interface (API) before becoming the default language models on the OpenAI API in January 2022. They represent cutting-edge AI-powered language models.

So, let’s go over how you can access InstructGPT through the OpenAI API in the next section.


Accessing InstructGPT via the OpenAI API

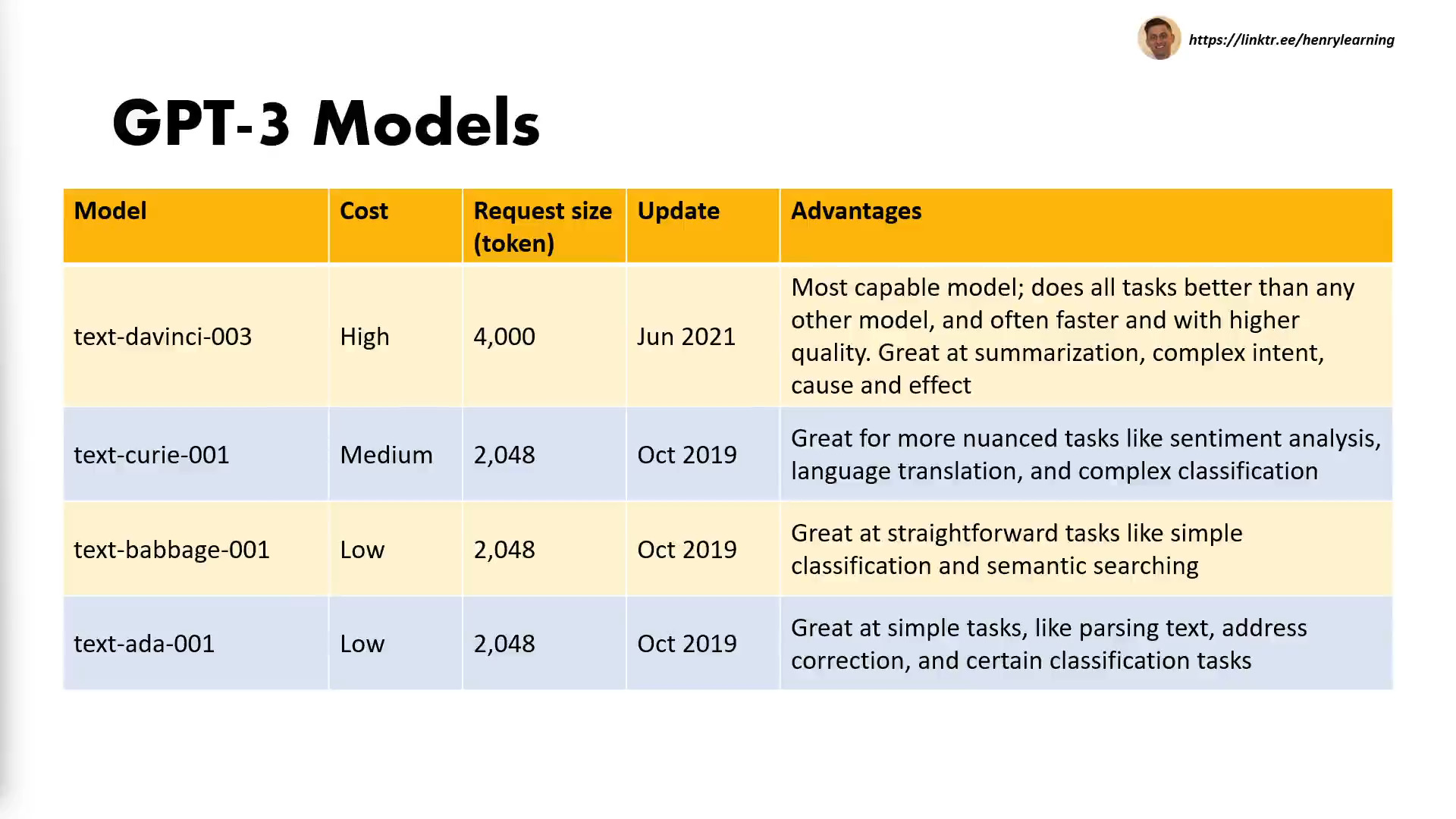

InstructGPT can be accessed through the OpenAI API, providing developers with a powerful language model for a variety of tasks.

The API offers a diverse set of models with different capabilities, including InstructGPT models fine-tuned with human feedback.

To access InstructGPT via the OpenAI API, developers need to:

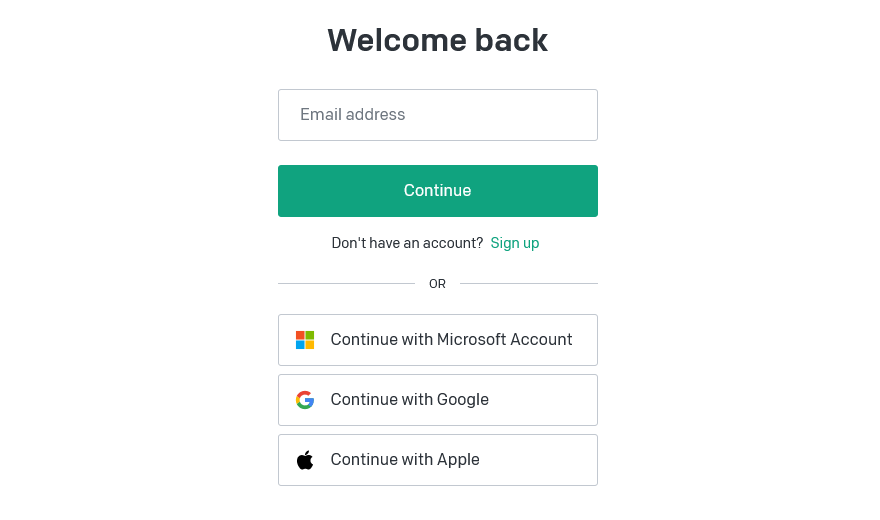

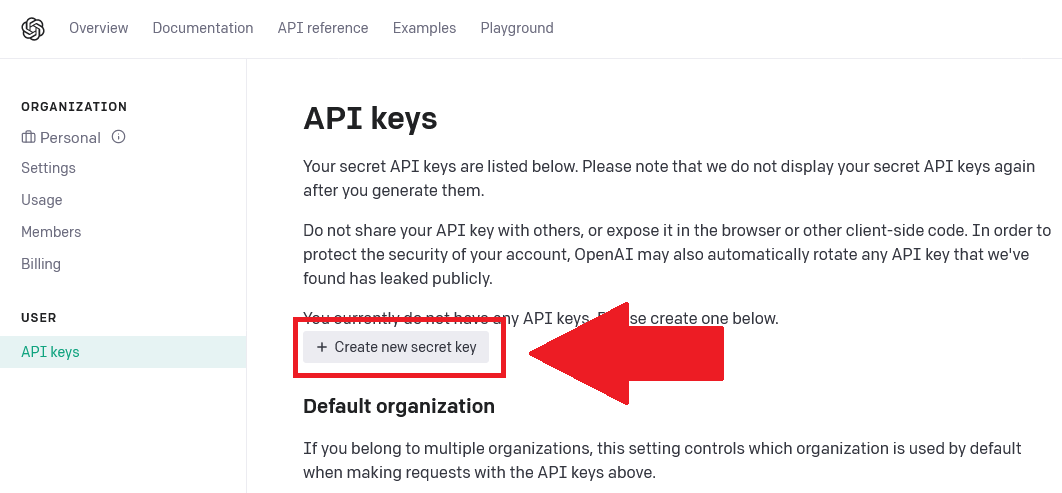

1. Visit platform.openai.com and create or sign into your OpenAI account

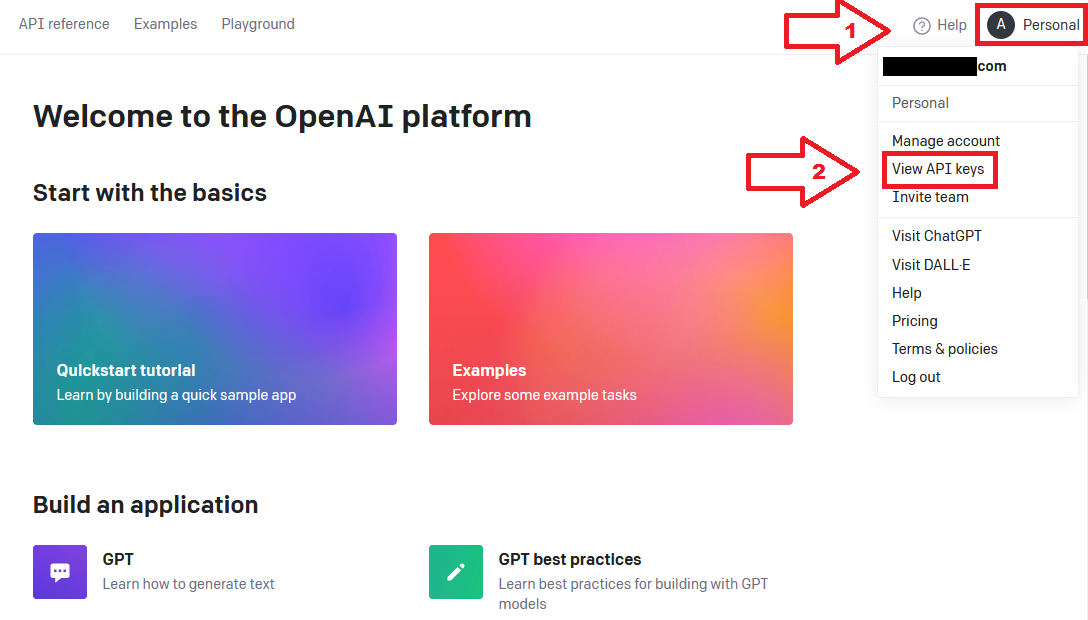

2. Click on “Personal” in the top-left corner and then choose “View API keys” from the drop-down menu

3. On the API keys page, click on the “Create new secret key” button

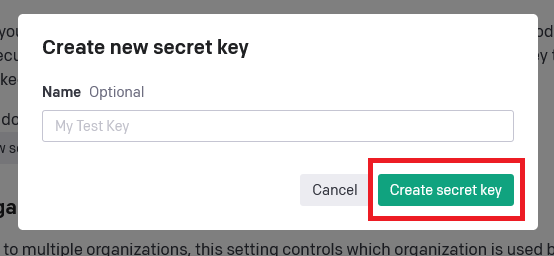

4. In the dialogue window, enter a name for your key and then click “Create secret key”

And voila! OpenAI will generate a new secret API key for you.

Make sure you save it somewhere safe because you won’t be able to view it again through your OpenAI account, and don’t forget to fill out your billing information!

You can now use the API key to integrate InstructGPT into your custom apps. You can test the capabilities of the API via the OpenAI playground.
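As a rough idea of what using the key looks like in code, here is a minimal sketch that uses only Python’s standard library to build a request for the API’s completions endpoint. The helper names are hypothetical, the key is a placeholder, and the model name `gpt-3.5-turbo-instruct` is an assumption: model availability changes over time, so check which instruction-following models your account can use.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/completions"


def build_completion_request(api_key, prompt, model="gpt-3.5-turbo-instruct", max_tokens=100):
    """Build (but do not send) an HTTP request for the completions endpoint."""
    # The model name above is an assumption; swap in one available to you.
    body = json.dumps({"model": model, "prompt": prompt, "max_tokens": max_tokens})
    return urllib.request.Request(
        API_URL,
        data=body.encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # the secret key from step 4
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_request(request):
    """Send the request and return the text of the first completion."""
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    return payload["choices"][0]["text"]


# Placeholder key: replace it with your own secret key before sending.
request = build_completion_request("sk-your-secret-key", "Explain RLHF in one sentence.")
```

Calling `send_request(request)` with a real key would send the prompt and return the generated text. In a real app, read the key from an environment variable rather than hard-coding it.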

To learn more about the ins and outs of the OpenAI API, check out this comprehensive guide.

In the next section, we’ll take a look at some popular applications of InstructGPT models.

Applications of InstructGPT

The capabilities of InstructGPT open up a world of possibilities for its application. Its ability to understand and follow instructions in carefully engineered text prompts makes it a versatile tool that can be used in a wide range of scenarios. Here are some of the key areas where InstructGPT is making a difference:

1. Content Generation: InstructGPT can be used to generate a wide range of content, from blog posts and articles to creative writing and even code. Its ability to follow instructions, a result of reinforcement learning from human feedback, allows it to create content that is tailored to specific requirements, making it a powerful tool for content creators.

2. Customer Service: InstructGPT can be used as a customer assistant that automates customer service interactions, answers customer queries, and provides information on request. This can help businesses provide faster, more efficient customer service.

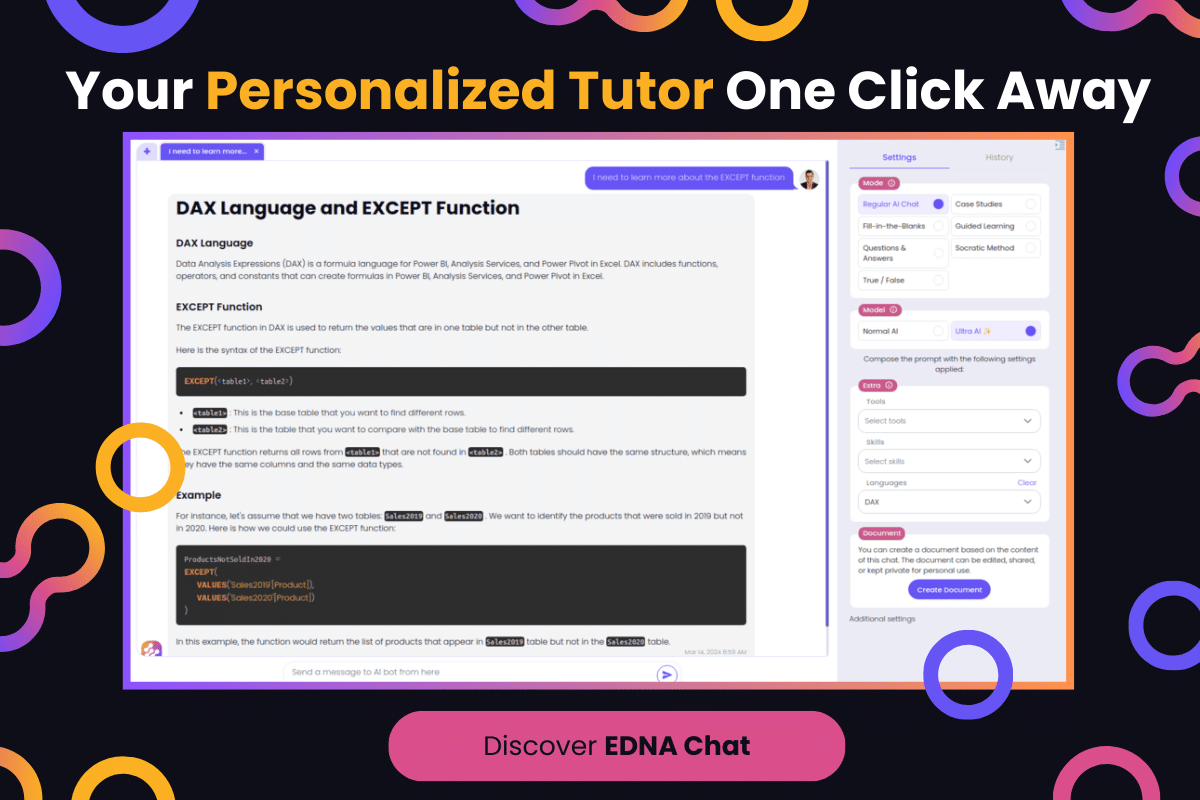

3. Education and Training: InstructGPT can be used as a teaching tool, providing detailed explanations and answers to questions on a wide range of topics. This makes it a valuable resource for students and educators alike.

4. Research: InstructGPT can be used to summarize research papers, analyze data, and provide insights, making it a useful tool for researchers.

5. Personal Assistants: InstructGPT can be used to power personal assistant apps, helping users manage their schedules, answer questions, and perform tasks based on the instructions they provide.

These are just a few examples of the many potential applications of InstructGPT using text prompts. As the technology continues to evolve, we can expect to see even more innovative uses for this powerful AI model.

The future of InstructGPT is incredibly exciting, and we’re just beginning to scratch the surface of what it’s capable of. However, the technology is not without limitations as you’ll see in the next section.

InstructGPT’s Limitations

While InstructGPT represents a significant leap forward in AI-powered language models, it’s important to acknowledge that it’s not without its limitations.

Here are a few of its limitations.

1. Truthfulness and Factuality

Although InstructGPT has shown improvements in following instructions and reductions in generating untruthful information compared to GPT-3, it’s still not perfect.

In some cases, the model may still produce responses that are not entirely accurate or factually correct. Researchers have found that InstructGPT models make up facts less often than GPT-3, but the problem has not been completely eliminated.

If you use InstructGPT, then you should be aware of these limitations when engaging with the model for various language tasks.

2. Understanding User Intent

InstructGPT, like other AI models, can face challenges in understanding human intent, especially when text prompts or instructions are ambiguous.

As a result, the model might produce outputs that don’t align with the intended meaning or context of the user input. This limitation can potentially lead to inaccuracies in translation, general language tasks, and overall performance.
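One practical way to reduce this kind of ambiguity is to spell out the task, the expected output, and any constraints in the prompt itself. The sketch below shows the idea with a small hypothetical helper; the field labels and layout are just one possible convention, not an official prompt format.

```python
def build_instruction_prompt(task, text, output_format, constraints=()):
    """Assemble a prompt that states the task, format, and constraints explicitly."""
    lines = [f"Task: {task}", f"Output format: {output_format}"]
    for constraint in constraints:
        lines.append(f"Constraint: {constraint}")
    lines.append("Text:")
    lines.append(text)
    return "\n".join(lines)


# An ambiguous prompt: no target language, and "bank" has two meanings.
ambiguous_prompt = "Translate this: The bank was steep."

# The same request with the intent made explicit.
explicit_prompt = build_instruction_prompt(
    task="Translate the text from English to French.",
    text="The bank was steep.",
    output_format="A single French sentence, with no commentary.",
    constraints=["'bank' means a riverbank here, not a financial institution."],
)
```

The explicit version leaves far less for the model to guess, which is usually the cheapest fix when outputs drift from what you meant.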

In the process of addressing the limitations of InstructGPT, it’s important to consider the following:

- Human evaluations: Continuous and iterative evaluation of the model by human annotators is crucial to identify and address false or misleading outputs.

- Enhancing the training process: Refining InstructGPT’s training procedure and using a more diverse dataset during model pretraining can reduce the limitations related to understanding user intent and truthfulness.

- Collaborative research: Encouraging collaboration among researchers and sharing knowledge can lead to better solutions and further fine-tune models like InstructGPT.

While these limitations present challenges, they also provide valuable direction for future research and development.

OpenAI is continually working to improve upon these limitations and enhance the capabilities of models like InstructGPT.

Despite these limitations, InstructGPT remains a powerful tool and a significant advancement in the field of AI-powered language models.

Future Prospects for InstructGPT Models

Alright, let’s talk about what’s next for InstructGPT. It’s already pretty impressive, but believe it or not, it’s just getting started. There’s a whole world of possibilities out there, and InstructGPT is gearing up to dive in headfirst.

From getting even smarter at understanding and generating text to finding its way into new and exciting fields, the future’s looking bright for this AI whizz.

1. Model Size and Performance

As InstructGPT evolves, we can expect improvements in model size and performance. In OpenAI’s evaluations, human labelers preferred outputs from the 1.3B-parameter InstructGPT model over outputs from the 175B-parameter GPT-3 model, even though it has more than 100x fewer parameters.

This demonstrates that fine-tuning GPT-3 with RLHF to follow user intent has a positive impact on generating more reliable outputs.

Advancements in natural language processing and neural network architecture may allow future models to have a better understanding of complex English instructions.

Moreover, they may excel at tasks like question-answering, reasoning, and text classification, which rely heavily on a model’s ability to follow instructions and provide accurate information.

2. Safety and Ethics

When developing models like InstructGPT, it’s essential to prioritize aspects like safety and ethics. By addressing concerns like bias, appropriateness, and the potential to convey harmful sentiments, developers can ensure that these models benefit users while minimizing risks.

In its current form, InstructGPT has shown a reduction in toxic output generation and improved truthfulness. However, there’s still room for expansion in areas like:

- Transfer Learning: Applying lessons learned from fine-tuning InstructGPT to other tasks, such as language translation and text completion.

- Safety Measures: Developing more robust methods to eliminate biases and decrease the generation of unsafe or harmful content.

- Few-Shot Learning: Utilizing generative pre-trained transformer architecture to handle various tasks with limited examples.

So, there you have it. The future of InstructGPT is like a sci-fi movie waiting to happen. Sure, there are some hurdles to jump and ethical puzzles to solve, but the potential is just too big to ignore.

With InstructGPT and its future siblings helping us out, we could be looking at a world where our jobs get easier, our information gets more accessible, and our creativity gets a major boost. So, here’s to the future of InstructGPT – it’s going to be one heck of a ride!

Final Thoughts

InstructGPT, with its ability to follow instructions and generate impressively human-like text, is not just a testament to the rapid progress being made in AI but also a beacon illuminating the path forward.

From its roots in the evolution of models like GPT-1, GPT-2, and GPT-3, to its current capabilities and potential applications, InstructGPT is a true game-changer. It’s reshaping how we interact with technology and opening up new possibilities for automation.

The best part? We’re just at the beginning of this exciting journey, and it’s a journey we’re thrilled to embark on. The future is here, and it speaks our language!

If you’d like to know more about how you can integrate AI language models into your workflow, check out our video on how to integrate ChatGPT into Outlook using Power Automate: